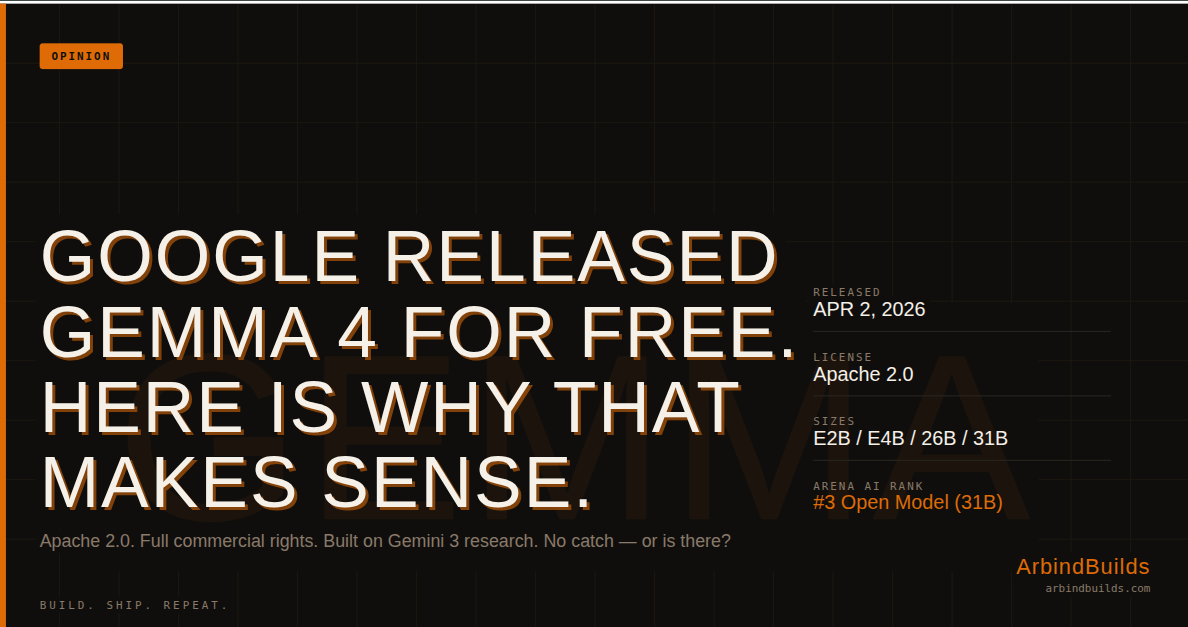

Google Released Gemma 4 for Free. Here Is Why That Makes Sense.

Gemma 4 dropped April 2, 2026 under Apache 2.0 with full commercial rights. This is what the architecture actually does and what Google is really after.

On April 2, 2026, Google released four AI models. You can download them, run them locally, fine-tune them on your own data, ship products built on top of them, and charge customers for those products. No usage fees. No per-token billing. No reporting requirements back to Google. Just a standard Apache 2.0 license and a Hugging Face page.

That is a strange move for one of the biggest technology companies on earth. Google has profitable API services. They have Google Cloud. They have Gemini. So why are they handing out a model built on the same research as Gemini 3, completely free, with full commercial rights?

There is a reason. Once you see it, the whole release makes sense.

What Gemma 4 Actually Is

When you call the Gemini API, your prompt leaves your machine, travels to Google's servers, gets processed on their GPUs, and the response comes back. You pay per token in and per token out. Your laptop is just a terminal. The actual computation happens somewhere else, on hardware you do not control.

Gemma works differently. You download the model weights once. Those weights contain everything the model learned during training, frozen into a file on your disk. After that, everything runs locally. Your CPU, your GPU, your RAM. No internet needed, no API call, no Google server involved.

This is the same reason Llama from Meta became so widely used. Meta released its weights openly, and the community quickly built tools like Ollama that run a capable model on a MacBook with one terminal command. Gemma 4 works with the same ecosystem. If you already use Ollama, getting started is one line:

# 26B MoE — the sweet spot for most developers

ollama run gemma4

# 31B Dense — maximum quality, needs ~24GB VRAM

ollama run gemma4:31b
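
Once a model is pulled, Ollama also serves it over a local HTTP API, so an application can call it without any cloud round trip. The following is a minimal sketch, assuming Ollama is running on its default port (11434) and that the `gemma4` tag from the commands above exists on your machine. The helper names `build_request` and `generate` are illustrative, not part of any library.

```python
import json
import urllib.request

# Ollama's default local endpoint for one-shot text generation.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "gemma4") -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "gemma4") -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]
```

Calling `generate("Explain Apache 2.0 in one sentence.")` only works while the Ollama server is running and the model is already downloaded. The request never leaves `localhost`.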

Running local AI is not a new idea. Llama has been running locally for over two years. Mistral and Phi have run locally as well. What is new with Gemma 4 is the quality of what you can now run locally. The gap between cloud models and local models just got significantly smaller.

The Four Models and What Makes Them Interesting

Gemma 4 ships in four sizes: E2B, E4B, 26B, and 31B. The naming tells you something about the architecture inside each one.

E2B and E4B: Per-Layer Embeddings

The "E" stands for "effective" parameters. These two edge models use a technique called Per-Layer Embeddings (PLE). In a standard transformer, a token is converted into an embedding vector once, at the input. Every decoder layer then works from the hidden state that grew out of that single lookup, and no layer gets its own view of the token. Think of it as one ID badge that gets checked at every floor of a building, whether or not that floor cares about the details on it.

PLE gives each decoder layer its own small secondary embedding for every token. Each layer gets a richer, more specific signal about what it is processing. The model does not need to be as wide or as deep to produce good output, because each layer starts with better information.
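
To make the idea concrete, here is a toy sketch of the difference between a single input embedding and per-layer embeddings. It is not Gemma's implementation. The sizes, the projection step, and the stand-in "layer" (a simple scaling) are all assumptions chosen only to show where the extra per-layer lookup enters the forward pass.

```python
import random

random.seed(0)

VOCAB, HIDDEN, PLE_DIM, LAYERS = 10, 8, 2, 3  # toy sizes, not Gemma's


def rand_matrix(rows, cols):
    return [[random.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]


# One shared input embedding table: looked up once per token.
token_embedding = rand_matrix(VOCAB, HIDDEN)
# PLE (as assumed here): one small extra table per layer,
# plus a projection from PLE_DIM up to HIDDEN.
layer_embeddings = [rand_matrix(VOCAB, PLE_DIM) for _ in range(LAYERS)]
layer_projections = [rand_matrix(PLE_DIM, HIDDEN) for _ in range(LAYERS)]


def project(vec, matrix):
    """Multiply a row vector by a matrix with len(vec) rows."""
    return [sum(v * row[j] for v, row in zip(vec, matrix))
            for j in range(len(matrix[0]))]


def forward(token_id, use_ple=True):
    hidden = list(token_embedding[token_id])  # single lookup at the input
    for layer in range(LAYERS):
        if use_ple:
            # Layer-specific signal for this token, added to the hidden state.
            extra = project(layer_embeddings[layer][token_id],
                            layer_projections[layer])
            hidden = [h + e for h, e in zip(hidden, extra)]
        # Stand-in for attention + MLP; a real layer transforms `hidden` here.
        hidden = [0.5 * h for h in hidden]
    return hidden
```

With `use_ple=False`, the token's identity enters only once, at the bottom of the stack. With `use_ple=True`, every layer receives its own small lookup for the same token, which is the "richer, more specific signal" described above.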

The practical result: the E2B runs in under 1.5 GB of RAM. Plenty of smartphone apps and games use more. And this model understands text, images, and audio, works in over 140 languages, and runs completely offline. That number is worth sitting with.
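
A rough back-of-the-envelope check shows how a figure like that can be reached. The sketch below assumes "E2B" means roughly 2 billion effective parameters and counts the weights only, ignoring the KV cache, activations, and runtime overhead. The article does not state which precision the 1.5 GB figure uses, so the bit widths here are illustrative.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate size of the weights alone, in decimal gigabytes."""
    return num_params * bits_per_param / 8 / 1e9


# Assumption: about 2 billion effective parameters for E2B.
for bits in (16, 8, 4):
    print(f"{bits}-bit: {weight_memory_gb(2e9, bits):.1f} GB")
# 16-bit: 4.0 GB
# 8-bit: 2.0 GB
# 4-bit: 1.0 GB
```

Under these assumptions, only a heavily quantized copy of the weights fits inside a 1.5 GB budget, which suggests the figure refers to a low-precision build.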
